In Part 1 of a series examining the apparent acceleration of the universe, we looked at determining astronomical distances using parallax and standard candles like Cepheid variables. Two of the properties required of a good standard candle are uniformity in intrinsic brightness across space and time, and sufficient intrinsic brightness to be seen at ever greater distances. While Cepheids are great standard candles, their lower brightness means they can’t be used out to distances that sample the universe at a scale where we might see things like acceleration. What’s needed is a brighter class of objects that can also function as standard candles. So here, in the “Empire” of the series (trying to figure out how to end Part 3 with dancing teddy bears and/or magical ghosts), we examine supernovae, what they are, their properties, and how they might be used as standard candles.

Supernovae are massive stellar explosions, so bright that they can outshine the galaxy in which they occur. So they satisfy one of our criteria for a standard candle – they can be seen at large distances. How about uniformity? That requires a bit more explanation. I should note up front that there are broadly two classes of supernovae, Type I (largely binary systems) and Type II (core-collapse). Since astronomers seem to love taxonomy, there are apparently as many sub-classes as objects, but we’ll stick to these two types.

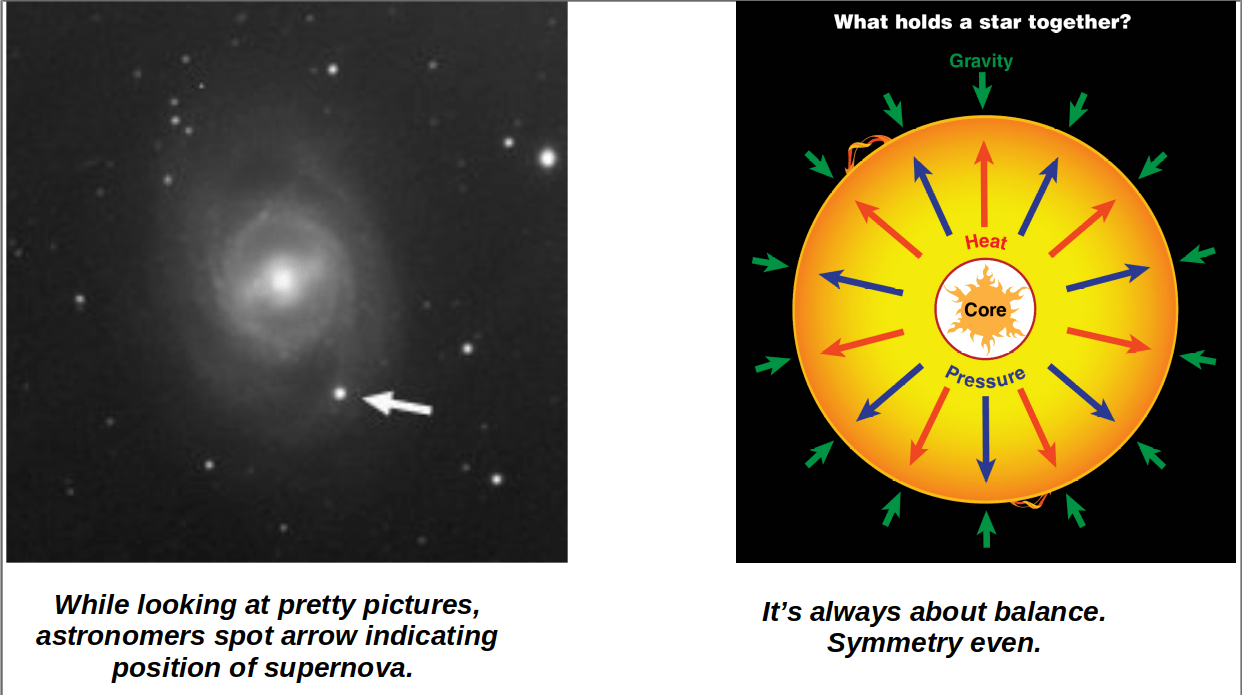

Stars are supported by nuclear fusion energy – they are constantly converting lighter elements to heavier elements in their cores, and the energy produced balances the weight of the outer layers, resulting in stable objects. Stars spend most of their lives converting hydrogen to helium in their cores. This is the stable, long-lived portion of a star’s life, known as the main sequence. Our sun is roughly halfway through its main sequence lifetime, and the stability of this phase is what has allowed the solar system to form and life to develop and evolve.

This takes a long time. Not sure it’s worth it. But if you’re going to do it, you need a stable energy source.

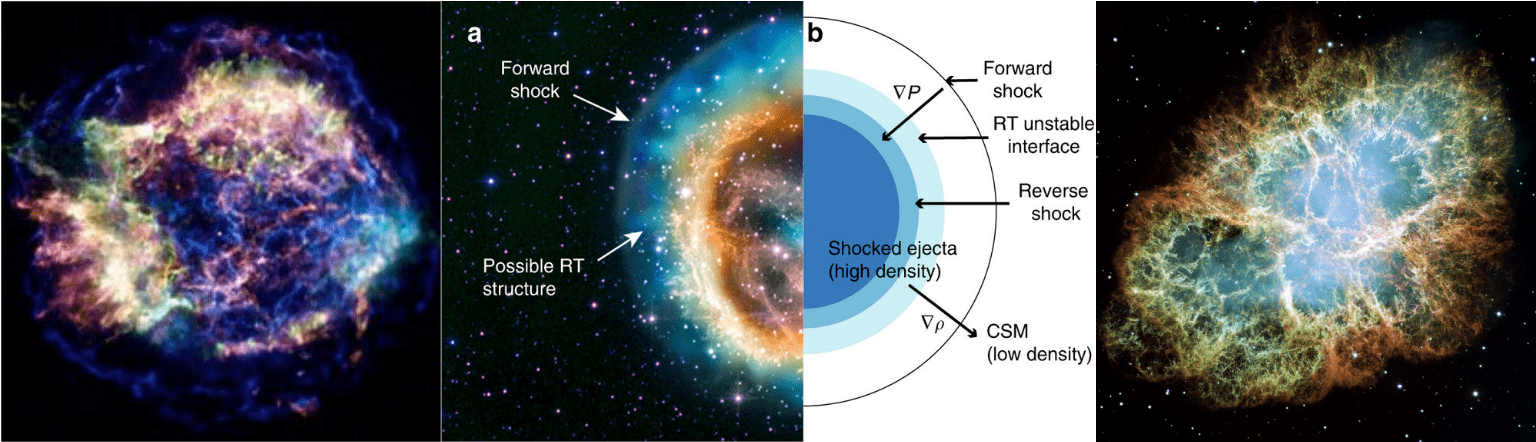

Of course, though it’s the most abundant element in the universe, the amount of hydrogen in a star is finite. As the hydrogen is used up, the core will contract, heat up and start fusing the helium ash into carbon, providing a new source of pressure support against gravity. Depending on the mass of the star, it may fuse only hydrogen before burning out (very low mass stars) or go all the way up through iron (very massive stars). Our sun will stop at fusing helium – its fate is a tale for a different day. But while more massive stars can continue fusing heavier elements to provide pressure support, there’s still an end point. When the core reaches iron, nuclear fusion becomes endothermic – rather than producing energy, it requires energy (a lot!), so fusion can no longer provide pressure support against gravity and the core contracts. It will reach a point where a quantum effect called electron degeneracy pressure can provide support for the upper layers and the star is once again stable. But electron degeneracy pressure can only support a core up to a certain mass (roughly 1.4 times the mass of the sun, the Chandrasekhar mass). In the core of a massive star, small amounts of nuclear fusion occur at the boundary between the layers, continuously depositing material onto the inert core. At some point, enough material accumulates on the core that it exceeds the magic 1.4 solar mass limit for electron degeneracy pressure and it can no longer support itself, so it collapses catastrophically and the outer layers come crashing in. Depending on the initial mass and other details, this is the process by which a neutron star or “stellar mass” black hole is formed. In the former case, electrons are forced into the nuclei, where they combine with protons to form neutrons, releasing neutrinos. The resulting neutron core is supported by neutron degeneracy pressure and stops collapsing. Of course, the outer layers don’t stop. They ‘bounce’ off the new neutron core.
The huge flux of neutrinos traveling through the dense overlying layers and the bounce create a series of outward and inward traveling shock waves that completely disrupt the outer layers of the star (the core stays intact as a neutron star. Or not.) and result in the visible event. Stars that undergo this evolutionary sequence end their lives as core-collapse (Type II) supernovae. Incidentally, the energy and pressure in the explosion are such that elements heavier than iron are created – many of the elements beyond the iron peak are created in supernova explosions. (Takes deep hit) We are all star stuff, man. OK, OK, that was just a weak excuse to link some metal.

It may not be intuitively obvious at this point, but the process above violates our other criterion for a standard candle, that of uniformity. While the core may be very similar from one star to the next, the visible explosion is caused by shock waves in layers that vary in mass (significantly) and perhaps composition, and it propagates into surrounding material of very different densities, along with many other variables. Hence, each core-collapse supernova may have a unique brightness. So why are supernovae considered standard candles? Well, if there’s a Type II supernova, there must be a Type I supernova, right? And there is. Very crudely, Type Ia supernovae occur on a bare core – what we’d call a white dwarf. These objects are the evolutionary end point of stars that only have enough mass for fusion to proceed as far as helium, or carbon and oxygen. This is the fate of our sun incidentally; it will end its life as a carbon-oxygen white dwarf, supported by electron degeneracy pressure, destined to slowly cool over billions of years.

To get to a Type Ia supernova, we’re dealing with carbon and oxygen white dwarfs in close binary systems. With a nearby companion, the white dwarf may steal matter from its companion and hence grow in mass. As it approaches the Chandrasekhar mass of approximately 1.4 solar masses, the pressure will be enough to trigger carbon fusion. Within a matter of seconds, the white dwarf will undergo a runaway fusion reaction, and the energy released in that reaction will be seen as a Type Ia supernova. Type Ia supernovae can also be produced by the merger of two white dwarfs. Here we satisfy, to some degree, the second criterion for a standard candle. The explosion always starts from the same place – a degenerate CO white dwarf with a mass of ~1.4 times that of the sun. Therefore, these explosions tend to have the same peak brightness. That is why Type Ia SN are considered standard candles, and they are the objects used to measure the apparent acceleration of the universe. Parenthetically, Type II core-collapse supernovae also start on roughly the same mass and composition core – they are just surrounded by a bunch of crap, and that makes them much more variable in their dynamics and energy output.
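To make the "same peak brightness means known distance" idea concrete, here is a minimal sketch of the standard distance-modulus arithmetic, m − M = 5 log10(d / 10 pc). The peak absolute magnitude of −19.3 is a commonly quoted ballpark that I am assuming for illustration, not a number from this article, and the function names are made up. It also ignores dust, redshift corrections, and the calibration discussed further down.

```python
# Assumed ballpark peak absolute magnitude for a Type Ia supernova.
# Illustrative only; real analyses calibrate this value carefully.
M_PEAK_IA = -19.3


def distance_pc(apparent_mag, absolute_mag=M_PEAK_IA):
    """Distance in parsecs from the distance modulus m - M = 5*log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


def distance_mpc(apparent_mag, absolute_mag=M_PEAK_IA):
    """Same distance, expressed in megaparsecs."""
    return distance_pc(apparent_mag, absolute_mag) / 1.0e6


if __name__ == "__main__":
    # By definition, an object whose apparent magnitude equals its
    # absolute magnitude sits at 10 parsecs.
    print(distance_pc(M_PEAK_IA))
    # A hypothetical supernova observed to peak at apparent magnitude 24.
    print(distance_mpc(24.0))
```

The point of the sketch is that the only measured input is the apparent peak brightness; everything else rides on how well we know the intrinsic one.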

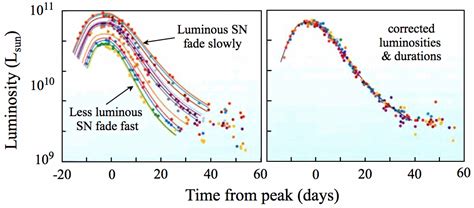

On the right, the ‘raw’ light curves of SN Type Ia. Note there’s a large dispersion in peak brightness (bad for a standard candle) and decay times. On the left, after calibration. The well-defined peak brightness (good for a standard candle) results from the tight relation between decay time and peak brightness.

The neat picture presented above is, of course, not so neat; Type Ia SN still have a range of observable physical characteristics: peak brightness, ‘colors’ (brightness at different wavelengths), and decay times (how long it takes the brightness to decay by a certain amount). A lot of work has gone into calibrating them so as to be able to treat them as standard candles. One of the major steps is the observation that decay time is tightly correlated with peak brightness, a relationship thought to be driven by the decay of radioactive nickel. So if we sweep lots of hard work under the rug, we can assume that we know how bright SN Ia are intrinsically and use them as standard candles. It follows that the observation of an accelerating universe based on SN Ia rests directly on all the work that’s gone into calibrating them.
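The decay-time correlation can be sketched as a simple linear relation between how much a supernova fades in the 15 days after peak (usually written Δm15) and its peak absolute magnitude. The coefficients below are the ones I recall from the original B-band fit by Phillips (1993); later work revised them, so treat them as illustrative assumptions. The function name is made up for this example.

```python
# Illustrative coefficients, as recalled from the Phillips (1993) B-band fit.
# Later calibrations differ; these are assumptions for the sketch only.
INTERCEPT = -21.726
SLOPE = 2.698


def peak_abs_mag_from_decline(delta_m15):
    """Estimate peak absolute B magnitude from the decline rate.

    delta_m15 is the number of magnitudes the supernova fades in the
    15 days after maximum light. Faster decliners (larger delta_m15)
    come out intrinsically fainter (numerically larger magnitude).
    """
    return INTERCEPT + SLOPE * delta_m15


if __name__ == "__main__":
    for dm15 in (0.9, 1.1, 1.5):
        print(dm15, peak_abs_mag_from_decline(dm15))
```

In practice, a measured decline rate is turned into a per-object intrinsic brightness this way, which is what collapses the scatter in the ‘raw’ light curves.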

Can we infer the absolute hotness of women (or beefcake if that’s your thing) in a historical context simply from examination of underwear? It’s a long and hard research project, but I’m willing to sacrifice for the sake of science.

One critical point – all the calibration of SN Ia is done ‘locally’. One uses SN Ia that occur in galaxies that are near enough for Cepheids or other methods to be used to determine the distance independently. With distance no longer an unknown, the intrinsic brightness can be inferred and correlated with other observable properties. We then assume that SN Ia observed at much larger distances are ‘identical’ to local SN Ia. If we remember that the further away we look, the further back in time we are looking, we realize that we are assuming that the properties of SN Ia in the distant past were no different from those of today. The ‘technical’ term for this is ‘luminosity evolution’ – how might the luminous properties of a class of objects change with time? I should emphasize that this is not referring to how a given object changes with time, but rather how the properties of a class of objects change depending on when/where members of that class formed. If I may be permitted a crude analogy, the average height of humans has changed throughout our history; hundreds of years ago (or in North Korea…) humans were on average much smaller than we are now. So if you were to look at the size of underwear from 100’s of years ago, and assumed that the average height of humans as a class was constant, you might erroneously infer that ancient humans enjoyed continuous wedgies. Similarly, if we incorrectly assume that SN Ia that formed billions of years ago are the same as those we observe today, we may make erroneous inferences about their distance. Critically, the papers that argue that observations of distant SN Ia require an accelerating universe addressed this issue as carefully as possible and suggested that luminosity evolution of SN Ia was negligible or, at the very least, insufficient to explain the observations.
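Both halves of that argument reduce to the same distance-modulus arithmetic, so here is a rough sketch. The first function is the local calibration step (distance known, solve for intrinsic brightness). The second shows how far off an inferred distance would be if distant SN Ia were intrinsically fainter or brighter than the local ones by some amount. All names and numbers are hypothetical, chosen only to illustrate the size of the effect.

```python
import math


def abs_mag_from_known_distance(apparent_mag, distance_pc):
    """Local calibration: with distance known (e.g. from Cepheids),
    solve m - M = 5*log10(d / 10 pc) for the absolute magnitude M."""
    return apparent_mag - 5.0 * math.log10(distance_pc / 10.0)


def distance_bias_factor(delta_mag):
    """Ratio of inferred to true distance if the true absolute magnitude
    is fainter than the assumed one by delta_mag magnitudes.

    delta_mag > 0 means the object is really dimmer than we assumed,
    so we would place it too far away.
    """
    return 10.0 ** (delta_mag / 5.0)


if __name__ == "__main__":
    # Hypothetical nearby SN Ia: peak apparent magnitude 12.0 in a galaxy
    # whose Cepheid distance is 20 Mpc (2.0e7 pc).
    print(abs_mag_from_known_distance(12.0, 2.0e7))
    # Hypothetical luminosity evolution of a quarter of a magnitude.
    print(distance_bias_factor(0.25))
```

A quarter-magnitude shift in intrinsic brightness works out to a distance error of roughly 12 percent, which is why ruling out luminosity evolution matters so much for the acceleration claim.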

If that’s not foreshadowing of Part 3, I don’t know what is.

A very good series! Ilike it, and inderstand it, Yipeee!

I’ll take Yusef’s word that he both likes it and understands it, that’s good enough for me. You youngsters are gonna have to do the worrying for me.

I can comment on the underwear issue. I feel that people just over the past 100 years have expanded considerably. Take Elvis, for example. He was considered fat when he died. But technically he wore what looked like a size 36, at the most (figured from viewing one of his last jumpsuits on display at a casino.) Today a 34/36 is medium or large at best.

So I absolutely support a study of underwear over time.

Commando if necessary but don’t mess with my blueberries.

Commando for the win, My Dickies are like Kilts, FREEDOM!

I think everything except the underwear part was in one of the freshman physics presentations I gave. Now I know why I only got a B+. Needed more women’s panties.

Another great installment!

After my transgression last night, the Great Firster has punished me. The visions have stopped. The growth of the First That Will Change Everything is now stunted. I will pray for forgiveness for all our sake. I only hope that I as the First of all Firsters will still be found worthy for this task.

The arrows are there, but they don’t work yet….

The arrows to the next and previous article work for me. It’s the up and down arrows that still don’t want to cooperate.

Same here.

the TPTB are busy, they will get to it,

This is all on WordPress.

There is a strange arrow on the right hand side of the screen which shoots me back up to the top of the article. But no matching arrow that takes me to the bottom. Pretty sure this is a plot to disrupt the site hatched by crazed progressives…

Some labor and/or civil rights lawyer is going to get rich.

https://www.nationalreview.com/news/google-launches-antiracism-program-teaching-that-america-is-a-system-of-white-supremacy/?utm_source=recirc-desktop&utm_medium=article&utm_campaign=river&utm_content=native-latest&utm_term=third

I only care if it’s mandatory. Like with schoolchildren.

I take your point. I’ll only add if this has any impact on promotions or advancement in Google then it sounds like a hostile work environment.

Yeah, good point.

hundreds of years ago (or in North Korea…) humans were on average much smaller than we are now.

It wasn’t even that long ago. The average WWI soldier was 5’6″.

So if you were to look at the size of underwear from 100’s of years ago, and assumed that the average height of humans as a class was constant, you might erroneously infer that ancient humans enjoyed continuous wedgies.

I toured this museum several years back. I remember being stunned at how small some of the suits of armor were. Austrian nobility were shorter than me.

And then you realize that Lord Dunsany was six-five or so. Nobility and good diets for the win.

Not to mention all those Dutchies with the stupid amounts of dairy in their diet. Tall, tall, tall.

I’m the same size as when I was 16 y/o. Until around 30 y/o, I could easily find pants my size, 30×30. Since they have become increasingly hard to find. I have no idea exactly why people are fatter, but they certainly are. Maybe it’s because they all work at desks and then go home and play video games all day. Both work and play involve chairs, and the average diet is probably not helping either.

I fondly remember those days.

I see the opposite. In the shops back when those were a thing I would see tons of 30×30 and nothing in fatter-people sizes because those were sold out.

I made the update on the lynx thread just as this article dropped. Not sure if seen, so I’m reposting…

I just got back from Carlsbad Caverns. I took some three hundred or so pictures I have to sort through to find the good ones. Outside the visitor center I spotted a butterfly and took This picture of it. Anyone know the type? It is living in southern New Mexico.

New Mexico cave Fly, they get big down there,

Eastern Black Swallowtail.

Or maybe an Old World Swallowtail, more native to the region.

On some Brittlebush.

Great article. I’m not so sure about Elvis only being a size 36. I’m no expert but fat, bloated Elvis looked considerably larger.

He needed to do a little less conversation and more action.

Well played.

I just woke up. The shekel I placed under my pillow is missing; my dreams are realized. The arrows are back!

Is there a Zoom call tonight?

Yes. It’s in the last post.

Thanks, Copernicus. I already navigated myself there.

I’m here to help as little as possible.

It’s the least you could do, and that’s why you did it.

it seems to be there, but not letting me in

Love this series.

Food for thought/discussion: Why are orbits 2-D in a 3-D universe? Your precursor image is a great example.

2-D, yet all revolutions in the universe are random.

??

Inertia?

I think Uranus has one moon that orbits at a wonky angle. Or maybe Neptune, I forget which.

Yes. Uranus is wonky.

My work is done here.

Triton orbits Neptune in the opposite direction from all the other moons around Neptune. It’s very likely it was a captured Kuiper Belt object.

Neptune itself orbits kind of wonky, it’s always shown tilted compared to the rest of the planets.

Uranus’ problem is that it got knocked over at some point so its axial tilt is extreme enough its pole points at the sun. Terrible messed up planet. Also SPACE SMITH’S home planet. 0/10 do not recommend.

Yeah I think that’s what I was misremembering. I used to love this stuff as a kid but I forgot most of it.

There are a fair number of holes in that theory.

If I understand the question as why things tend to be in disks: it’s conservation of angular momentum as collisions remove energy in e.g. the natal clouds. And orbits can be inclined if disturbed, galaxy disks warped depending on the distribution of material. And, in the disk around a white dwarf, it is 3-D, just the scale height is generally smaller than the radial dimension. But they do tend to ‘puff up’ and flare. So to first order, 2-D simply because of angular momentum and energy conservation, but in 2nd order, definitely 3-D.

+1 Flatland

Fuck it, Ringworld…

I can feel my mind expanding. Hydrocephalics look super smart to me.

I was reading A Short History of Nearly Everything for the millionth time on the plane today. Obviously this article is far more in-depth and sciency….it’s kind of a nice complement with Bryson’s historical themes.

I just heard that ERs in Oklahoma are overflowing with people who heard Kokomo at a party and poured Ivermectin in their skull to kill the ear worm. True?

You’ve got the best game of Telephone.

Parody from the before times made by Scandinavians.

https://www.youtube.com/watch?v=y9r0zm4surY

Man, that’s quite the combo of hilarious and sad, isn’t it?

https://archive.is/qEKta/e261578f2feb0e46fd225cb134986e94fabfa23b.jpg

NSFW.

https://archive.is/ez1cP/9f87003f0ac9c82b089f762f1ab03c80252bc35e.jpg

NSFW.

https://archive.is/hH0eU/5f2ecf72449c8ac320772f8a1f03ff852694ae4b.jpg

NSFW.

https://archive.is/mVjin/7943d397bbc108c359a3d888c267318bffbcd8b4.jpg

NSFW.

Perfect, Holy cow!

Why are the planets all basically in a plane? and not some in one lane and others at an angle to the plane?

Just spitballing, maybe due to inertia/gravity from formation in rotational momentum?

In planetary systems, it’s mostly because they form from the same disk of material (and the disk forms because of angular momentum). So it’s largely a common origin. Some ‘planets’ are inclined; Pluto is inclined wrt the rest of the solar system’s planets. That might be part of why it’s no longer considered a planet, as it didn’t form in the disk, but rather from left over material further out and was subsequently captured. Gravitational perturbations (from, say, a nearby passing star) can kick orbits out of the plane; but they will all tend to be in the same plane so long as they formed from the same cloud, and stay that way without some outside influence.

thanks

Are you the host? Can you let me in?

Why are the planets all basically in a plane?

Above and below the ecliptic are hundreds, nay thousands of celestial serpents. They’re deadly planet killers.

Fortunately, Jupiter’s gravity well has gotten rid of all the snakes on the plane. The other planets stay there because they know what’s good for them.

Angular momentum of the protoplanetary disk and the sun. The sun outmasses everything else in the solar system combined.

The theme music for this series. Of course

https://youtu.be/igHOaMOzzUo

And for the talk of yesterday’s sizing

https://youtu.be/-bRf0ktFCBw

Not So Deep Thoughts By Jackoff Handy:

For my right leaning friends here, please don’t reflexively support Trump 2024. I understand that he represents a collective Fuck You to the entrenched bureaucracy in America…Please keep in mind that his viewpoint is entirely reactionary. He was widely considered as mildly sympathetic to the Democrat Party’s platform until he ran as a Republican on a lark and somehow managed to win first the Republican nomination for breathing fresh air into the increasingly disillusioned Republican base, then the presidency because the Progressive Machine grew too cocksure. Had he been handpicked to lead in either party, he would have been more than compliant in increasing the expansion of the State. In a weird way, his ego staved off the authoritarians…only to see their efforts doubled to make up lost time near the end of his fortified departure.

The point being: If the right people had blown smoke up his ass, you’d hate him nearly as much as Obama.

Is he even an option?

I heard rumblings about him running again.

What would really tickle me is if he ran third party and won, abandoned all fucks, vetoed everything, then completely fractured the two party system.

This is my best case scenario for his return but I don’t see it happening. I have no faith in the man nor the system failing.

I suppose almost anyone’s an option, but I don’t think it’s realistic.

Love to see him as Speaker of the House, though. That’d be ++awse.

That was my fear. If the democrats had just swallowed their outrage, complimented him and treated him like one of the ‘in-crowd’, we’d be dealing with a bunch of gun control right now. I may be wrong – I mean he appointed Gorsuch and says some of the right liberty sounding things – but I thought/think his principles are skin deep and he would have worked with the Democrats if they hadn’t gone insane.

‘I thought/think his principles are skin deep and he would have worked with the Democrats if they hadn’t gone insane.’

Exactly.

He isn’t the savior and defender of individual rights, he’s a reactionary who goes against anything that penetrates his thin skin. The combination of populist political traction and aggrandizement.

The Ego is all, principles mean nothing.

I think you’re exactly right. I’m still amazed no one on Team Blue figured this out.

Hubris and impatience is a helluva drug.

Are there any Republicans publicly pushing against the discrimination against the Americans who chose not to subject themselves to the experimental medical therapy they don’t need? Trump is not among them. Maybe Rand Paul. Maybe DeSantis. Not anybody else.

And fuck Libertarians for vaccine passports.

Rand Paul is probably the only one and even then it is more ‘oh I so owned Fauci with that soundbite!” So no..no one

DeSantis was pretty blunt about this when asked recently. His quote (often taken out of context, natch) has caused much REEEEEE-ing among the blue check brigade.

Trump was on Gutfeld tonight saying similarly. Recommend but not force.

That’s about the best you can hope for.

Also, I apologize for not commenting on the article. I’m a fucking dunce and as much as I find the subject matter fascinating, I’m far too dumb to expand on the subject…I still enjoy these…keep them coming!

No soup for you!

Sometimes it makes no sense at all,

https://www.youtube.com/watch?v=6WBX4WK49Js&list=RD6WBX4WK49Js&start_radio=1

How cool. I don’t think I ever learned about the white dwarf smaller supernovae, only the big ones, and that they only occur in binary systems. huh. I’d think that would be too rare to use it as a base measurement for anything, but I guess with nearly infinite stars, even something relatively rare still pops up with regularity.

But all that is something I find hard to think about. I was looking up Neptune and Uranus, and read that Neptune is so far away it takes 164 YEARS to go around the sun. and it’s close to us, relatively. Like, I know that, but it blows my mind to consider the reality of it.

Maybe my best ever night of stargazing: I found out that Uranus and Neptune were near conjunction, and could be spotted with a backyard telescope. I set up my 4″ Tasco and star-hopped over to the location. Sure enough, both planets were in view. I stepped away from the telescope and looked at the location. The night was dark enough and I could finally make out the dot of Uranus. Barely visible under near-ideal conditions. It is stated that the early astronomers could have discovered Uranus but it moves so slowly that they assumed it was a fixed star.

I saw all eight major planets through that little scope. Several moons (ours, obviously) and even projected the sun onto a sheet of graph paper to check out the sunspots.

Tycho Brahe actually charted Uranus on a star chart well before Uranus was discovered. He doesn’t get credit for discovering the planet because he identified it as a star.

Good work, Putrid Meat! (That sounds so wrong…)

I’ve been thinking a lot about fusion and fission in nuclear weapons in light of the new job.

I found a site that does a good job of explaining it all, from the early Fat Man and Little Boy versions through hydrogen through neutron bomb through thermonuclear.

Very clear, neutral, and scientific.

Then you get to the very last section titled “What can we do?” And while I agree it’s good that nukes aren’t deployed willy-nilly through global conflicts, the arguments for disarmament they make are quite naive. Sure, I’ll put down my gun at the same moment you put down yours.

They even drag climate change into the anti nuke argument.

I am grateful they haven’t been used in anger since WW2, but I do think that mutually assured destruction works as a deterrent.

Anyway, here’s the link: https://www.ucsusa.org/resources/how-nuclear-weapons-work

I barely remember my classes on warheads during my weapons maintenance training. The funny thing that I remember the most was the instructor pointing out some of the dial-a-yield features and telling us that we didn’t see them.

I was fabulously happy when I found work on the Los Alamos laser fusion project. I truly believed that the liberation of energy was right at hand.

Short story: project gets de-funded and our hero scrambles madly for work.

My time at Rocky Flats convinced me that the idiots that I was working for were idiots and should have nothing to do with nuclear weapons.

Next thing I knew I was back at LANL making sure that “our” bombs would work when needed (short answer: they will; suck it Commies)

So I know fusion and I know fission. May the former be a reality soon and may the latter work very slowly (as in controlled) providing safe, clean, energy to our nation and God willing, never in anger.

“but I do think that mutually assured destruction works as a deterrent.”

As long as it’s not a doomsday device that the other side doesn’t know about (which the Soviets really had apparently and the Russians still do):

https://www.npr.org/templates/story/story.php?storyId=113242681

Month appropriate music

https://youtu.be/81cu3YInR7Y

well, new zoom, not sure what happened, but we’re here…

https://us06web.zoom.us/j/88071594630?pwd=a1N4WmZSQlhhVkNIbmNiMjE5TndTdz09

“White House Signals New COVID-19 Measures Coming For People Who Are Unvaccinated”

https://www.zerohedge.com/political/white-house-signals-new-covid-19-measures-coming-people-who-are-unvaccinated

What’s coming here I wonder? They just can’t bring themselves to leave it alone.

What’s coming is a political distraction from Afghanistan and Fauci’s woes.

This is pure politics and they really don’t give a shit who they hurt in the process.

Too bad dolphins lack opposable thumbs and live in the ocean. They might be the better species* to run the world.

https://www.theepochtimes.com/mkt_breakingnews/dolphins-alert-rescue-crew-to-missing-swimmer-stranded-at-sea-for-12-hours_3979724.html

*I know there are more than one.

Yay, Flipper(s)! Ummmm….any way to read the article without getting on yet another e-mail list?

The Epoch Times is the official mouthpiece of Falun Gong. Don’t believe your lying eyes. Dolphin is Assho! https://youtu.be/T5lTfIgJ8_M

I’m saving the article until I become a little more cogent, especially the underpants part. Mornin’ Glibbies!

Good morning, Fes! How are things in your corner of the world today?

Judi got “The Question” at work yesterday and the country’s second largest airline just went to a vax mandate. Other than breaking the will of the people, not so bad.

Sorry for not inquiring about your well being. Things alright at TB?

Things are good – thanks! A little cool at the moment, but I have just the thing for that. Reliable co-worker is back from his short vacation today, and my boss is back in the office, but the Big Boss is off to an all-day meeting offsite.

Question for you, Festus.

https://m.youtube.com/watch?v=NP9iOqdxS8c

Yikes! Haven’t heard that song for nearly 40 years. Moody Blues really was the most pretentious of the latter British Invasion. Thanks!

You say that like it’s a bad thing.

OK, often it is but MB are the bomb.

Thank you! A long-time favorite. I, too, am looking for a miracle in my life.

I see that three of the arrows are back but without the fourth musketeer, appear to be powerless. It’s a good sign!

The “back” arrow works, as does “forward” when there’s something to move forward to. Up & down don’t. They must still be collecting their unemployment.

I’m starting to think that up and down might have been on to something. Why did we spend all that time being called heroes and we’re gonna be put up against the wall first? Not like I didn’t see it coming but it was more like a tornado forming rather than the onset of winter. We’ll hold out as long as we can but really, pocket deuces are not a positional hand…

Morning, Glibs. Today I get out of this place and drive on to a state that isn’t doing stupid mask mandates.

Mornin’

I forgot to set my alarm clock.

*grumble grumble*

Sorry to hear that. I hate when that happens on a day when I need to be somewhere.

I think I made my second drink a lil too strong last night.

Good morning, U & Sean! U – will you make it all the way to The Big Hole in the Ground today, or is there another stop in between?

Today we’re stopping at the UFO museum before heading to Flagstaff. Depending on timing, we might stop at Meteor crater today. If not today, then tomorrow. It is Saturday we’re driving to the Grand Canyon proper.

Yay, M. Crater! Privately owned, you will notice.

Between tours the guides were chatting about the handguns they’d bought with visitors’ tips. I thought, “I was supposed to tip you guys?”

Also quite a few bikers (MCs) there. I don’t normally encounter that type out and about.

Maybe the tips were mentioning good deals?

No?

Heh. Maybe.

*waves*

Mornin’!

Mind officially blown by Putrid. I don’t pretend to understand it all, but it is very cool!

Dire warnings of more floods here in the NE appear to have been exaggerated just a bit. As I expected. Note to media: maybe if you stop crying wolf to generate clicks people will need the warnings when they are actually warranted.

Heed, not need.

Good morning, ‘patzie! Re: the Dire Warnings – if The Media doesn’t induce panic about the weather, whether it be snow or floods, The Customers won’t go panic-buying at The Media’s sponsor supermarkets.

No warnings here in the city – just rain all day.

Have you heard about Pluto?

They’re rebooting the Wonder Years.

I won’t be watching. Sorry, Dule.

As if the stagflation and the malaise weren’t bad enough – the seventies is giving us its preachy sitcoms, too.

Yeah, hard pass.

Alright. Went back and RTFA and I’m still ahead in the textbook. Guess I’m not so dull after all. Still going back to peruse the illustration(s) though.

Thanks for these, PM!

https://www.wgal.com/article/some-york-county-school-districts-now-accepting-mask-exemption-forms/37518900

https://www.buckscountycouriertimes.com/story/news/2021/09/03/pennridge-emails-exemption-forms-before-wolfs-mask-mandate-takes-effect/5711461001/

I like seeing the push back from school districts.

#metoo. I’m liking PA more every day.

I saw one on twits yesterday wherein the Sherriff basically told them that mandates were not settled law and stood down. The unmasked kids entered the school. Moar please!

They’re coming for your meat.

And if you take out energy, housing, and food, it’s like there’s no inflation at all!

Shit, I should get a job as a Biden flack.

That’s like living in NJ…you couldn’t pay me enough to do it.

She needed bigger boobs anyway.